A few days ago I posted about an ESX or ESXi network configuration that uses 4 physical NICs: Networking configuration for ESX or ESXi Part 1 – 4NIC on standard switches.

Today brings the second part of the series. This time the ESX(i) host has 6 pNICs (1Gbps) on standard switches (vSS). From a security and best-practice perspective, and from my own point of view 🙂, 6 physical NICs is the minimum. Having 6 NICs in an ESX(i) host provides enough bandwidth and enough physical devices to follow networking best practice and security standards (perhaps not for all organizations), allows failover, and gives more flexibility in the ESX(i) network design.

Scenario #1 – 6 NICs (1Gbps, 3 dual-port adapters) – standard switch for MGMT, vMotion, VM traffic, storage traffic and FT

In our scenario we have to design the network for 5 different types of traffic. Each type of traffic has its own VLAN ID, which lets us share each NIC between more than one type of traffic and make better use of the hardware:

- mgmt – VLAN ID 10

- vMotion – VLAN ID 20

- VM traffic – VLAN ID

- FT (Fault Tolerance) – VLAN ID 40

- Storage – VLAN ID 50

| vmnic | port group | state | trunk | vSwitch | pSwitch |
| --- | --- | --- | --- | --- | --- |
| vmnic0 | mgmt\vMotion | active in mgmt \ passive in vMotion | VLAN 10/20 | vSwitch1 | pSwitch1 |
| vmnic1 | VM traffic | active | no | vSwitch2 | pSwitch1 |
| vmnic2 | mgmt\vMotion | active in vMotion \ passive in mgmt | VLAN 10/20 | vSwitch1 | pSwitch2 |
| vmnic3 | FT\Storage | | VLAN 40/50 | vSwitch3 | pSwitch1 |
| vmnic4 | VM traffic | active | no | vSwitch2 | pSwitch2 |
| vmnic5 | FT\Storage | active in Storage \ passive in FT | VLAN 40/50 | vSwitch3 | pSwitch2 |
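One way to sanity-check a layout like this is to confirm that every vSwitch has uplinks on both physical switches, so that losing one pSwitch does not isolate any traffic type. The following is a minimal Python sketch of that check. The vmnic, vSwitch and pSwitch names are copied from the table; the function names are hypothetical and exist only for this illustration.

```python
from collections import defaultdict

# Uplink layout transcribed from the table: vmnic -> (vSwitch, pSwitch).
UPLINKS = {
    "vmnic0": ("vSwitch1", "pSwitch1"),
    "vmnic1": ("vSwitch2", "pSwitch1"),
    "vmnic2": ("vSwitch1", "pSwitch2"),
    "vmnic3": ("vSwitch3", "pSwitch1"),
    "vmnic4": ("vSwitch2", "pSwitch2"),
    "vmnic5": ("vSwitch3", "pSwitch2"),
}


def pswitches_per_vswitch(uplinks):
    """Collect the set of physical switches each vSwitch is cabled to."""
    reach = defaultdict(set)
    for vswitch, pswitch in uplinks.values():
        reach[vswitch].add(pswitch)
    return dict(reach)


def single_points_of_failure(uplinks):
    """Return the vSwitches whose uplinks all land on one physical switch."""
    reach = pswitches_per_vswitch(uplinks)
    return sorted(v for v, switches in reach.items() if len(switches) < 2)


if __name__ == "__main__":
    # An empty list means every vSwitch survives the loss of one pSwitch.
    print(single_points_of_failure(UPLINKS))
```

For the table as given the list comes back empty, because each vSwitch has one uplink on pSwitch1 and one on pSwitch2. Re-cabling vmnic2 to pSwitch1, for example, would make the check report vSwitch1.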

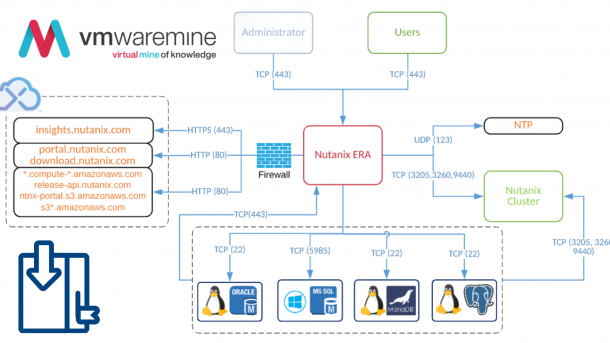

vSwitch0 – as usual in my designs (and not only mine), this switch carries management and vMotion traffic. Two vmnics, vmnic0 (from the on-board NIC) and vmnic2 (from the first dual-port adapter), work in Active/Passive mode. Active/Passive is the middle road: a compromise between the hardware resources we have (only 6 NICs) and preserving security based on hardware and network segmentation (vMotion and mgmt use different physical NICs and different VLAN IDs), while only two physical ports are occupied by the mgmt network.

The vSwitch should be configured as follows:

• Load balancing = route based on the originating virtual port ID (default)

• Failback = no
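To illustrate what Failback = no means for an Active/Passive pair such as vmnic0 and vmnic2, here is a minimal Python sketch. It is a toy model under simplified assumptions, not VMware's teaming implementation. The `Team` class and its method names are hypothetical and exist only for this illustration.

```python
class Team:
    """Toy model of an active/standby NIC pair for one port group."""

    def __init__(self, active, standby, failback=False):
        self.order = [active, standby]       # configured failover order
        self.failback = failback
        self.link_up = {active: True, standby: True}
        self.current = active                # uplink carrying traffic now

    def set_link(self, nic, up):
        """Record a link state change and re-evaluate the uplink in use."""
        self.link_up[nic] = up
        self._reselect()

    def _reselect(self):
        healthy = [n for n in self.order if self.link_up[n]]
        if not healthy:
            self.current = None              # no uplink left
        elif self.failback or self.current not in healthy:
            # With failback: always return to the first healthy uplink
            # in the configured order.
            # Without failback: move only when the current uplink is down.
            self.current = healthy[0]


if __name__ == "__main__":
    mgmt = Team("vmnic0", "vmnic2", failback=False)
    mgmt.set_link("vmnic0", False)   # active uplink fails
    print(mgmt.current)              # traffic is now on the standby
    mgmt.set_link("vmnic0", True)    # original uplink recovers
    print(mgmt.current)              # still on the standby: no failback
```

In this model, with `failback=False` the port group stays on vmnic2 after vmnic0 recovers, which avoids moving traffic back onto a link that may still be flapping. With `failback=True` the same sequence would end on vmnic0 again.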

vSwitch1 – designated only for VM traffic. Two vmnics, vmnic1 (from the on-board NIC) and vmnic4 (from the third dual-port NIC adapter), give 2 x 1Gbps reserved only for VM traffic; in 95% of cases that is more than enough.

vSwitch2 – this one is a bit more complicated because FT and storage traffic are very demanding. The VMware recommendation for FT and storage traffic is 10Gbps, but I have implemented FT on a 1Gbps NIC per server (2 FT-enabled VMs per server). The same applies to storage traffic: you have to consider how much traffic you will need, how many VMs you will have per server, and what type of workloads you will run (DBs, web, file servers etc.).

In the above configuration it is possible to add one more VLAN, for example a DMZ. It can be placed in vSwitch2 together with VM traffic. However, a very common practice is to separate the DMZ completely (at the hardware and software level) from other traffic.

The diagram shows a configuration that has been implemented many times for many customers, so it should work in your environment too. It is a logical diagram in which all components and the connections between them are listed.

See the links below for other networking configurations:

- ESX and ESXi networking configuration for 4 NICs on standard and distributed switches

- ESX and ESXi networking configuration for 6 NICs on standard and distributed switches

- ESX and ESXi networking configuration for 10 NICs on standard and distributed switches

- ESX and ESXi networking configuration for 4 x 10 Gbps NICs on standard and distributed switches

- ESX and ESXi networking configuration for 2 x 10 Gbps NICs on standard and distributed switches

Greetings Artur, I’m new to VMware and this is an excellent article for me, as I’ve learned a lot from it. How would you configure a physical host with 10 physical ports? I’m going to run demanding Oracle databases on these ESX hosts. The host has 4x built-in 1GB ports, 4x 1GB ports from a quad-port adapter, and 2x 10GB ports from a dual-port adapter for private iSCSI traffic. This network exists between the storage systems and the ESX servers only. My plan is as follows: Vnic0 & Vnic4 for MGMT, Vnic1 & Vnic5 for vMotion, Vnic2,3,5,7 (aggregated) for VM traffic, Vnic8 & Vnic9 (10GB) for iSCSI…

Hi Artur,

I’m planning to use ESXi 5.0. I’ll build a VMware cluster with 4 Dell physical hosts. Each physical host has 10 Physical network ports and 2 HBA ports for SAN.

Regards

I don’t see any reference to the iSCSI heartbeat vmkernel port. Which port group do you recommend that it be configured on?

Thank you, Artur, this is very useful as it addresses something that I am trying to resolve at present. I hope you won’t mind me asking at this late stage if you could clarify one issue which is why you have only allocated one physical ethernet port to iSCSI (with a standby) yet more to VM network traffic which I would expect to generate less traffic than iSCSI. Is this because there is no benefit to grouping iSCSI connections? To give you a background to my question, we have the beginnings of a larger system with two ESXi 4.1 Essentials…

Thanks so much Arthur for your great work. I am new to VMware but have strong networking and iSCSI SAN knowledge. I was looking for recommendations around iSCSI networking in VMware and came across your blog, and I immediately subscribed to receive notifications about new posts. Really good articles, and I appreciate your sharing the knowledge. For iSCSI networking, I am trying to use the HP LeftHand P4000 VSA and am simulating it in a home lab running on VM Workstation, with a couple of ESXi hosts and the VSA VM installed, and using CMC to manage it. Since VSA can have two NICs and…

Hello Arthur,

I have a question on this design, where storage and FT are configured as active/standby.

I believe the VMware best practice is to have 2 iSCSI port groups with an active/unused configuration, so that each port group has one active uplink and the other uplink set as unused; overall, 2 paths are obtained for redundancy.

With the design here, will the active/standby connection cause any problems?

regards.

Arvinth.